S2. Parameters, Statistics and Estimation Flashcards

(26 cards)

Define parameter

Numerical measure that describes a specific characteristic of a population.

Define statistic

Numerical measure that describes a specific characteristic of a sample.

Equivalently, a function of the random variables in the sample, and so itself a random variable.

Define estimand

The parameter in the population which is to be estimated in a statistical analysis.

Define estimator

A function for calculating an estimate of a given population parameter based on randomly sampled data.

Define estimate

The numerical value the estimator takes once a specific sample has been drawn; a non-random number.
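To make the estimand / estimator / estimate distinction concrete, here is a minimal sketch. The population (the integers 1 to 100), the sample size of 10, and the name `sample_mean` are all made up for illustration.

```python
import random
import statistics

def sample_mean(sample):
    """Estimator: a rule (function) that maps any sample to a number."""
    return sum(sample) / len(sample)

# Hypothetical population: the integers 1..100.
population = list(range(1, 101))

# Estimand: the population mean, the quantity we want to learn about.
estimand = statistics.mean(population)

rng = random.Random(0)  # fixed seed so the run is reproducible
sample_a = rng.sample(population, 10)
sample_b = rng.sample(population, 10)

# Estimates: fixed numbers obtained by applying the estimator to one drawn sample.
estimate_a = sample_mean(sample_a)
estimate_b = sample_mean(sample_b)

print("estimand:", estimand)
print("estimate from sample A:", estimate_a)
print("estimate from sample B:", estimate_b)
```

The estimator is the same function in both cases; only the sample, and therefore the estimate, changes.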

Notation for population parameters and sample estimators

Equations for mean (population parameter and sample estimator)

Equations for variance (population parameter and sample estimator)

Equations for variance (binary) (population parameter and sample estimator)

Equations for standard deviation (population parameter and sample estimator)

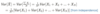
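As a sketch of the mean, variance and standard deviation cards, assuming the usual textbook convention (divide by N for a population quantity, by N − 1 for the sample variance estimator; the deck's own convention may differ). The data values and the `ddof` parameter name are illustrative choices.

```python
import math

def mean(xs):
    return sum(xs) / len(xs)

def variance(xs, ddof):
    """ddof=0 divides by N (population formula); ddof=1 divides by N - 1 (sample estimator)."""
    m = mean(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - ddof)

data = [2, 4, 4, 4, 5, 5, 7, 9]  # made-up values for illustration

pop_var = variance(data, ddof=0)     # treats data as the whole population
sample_var = variance(data, ddof=1)  # treats data as a sample from a larger population
pop_sd = math.sqrt(pop_var)          # standard deviation = square root of variance
sample_sd = math.sqrt(sample_var)

# Binary (0/1) data: the population variance reduces to p * (1 - p),
# where p is the proportion of ones.
binary = [1, 0, 1, 1, 0]
p = mean(binary)
binary_var = p * (1 - p)

print(mean(data), pop_var, sample_var, pop_sd, sample_sd)
print(p, binary_var, variance(binary, ddof=0))
```

The last line prints the binary variance twice, once from the shortcut p(1 − p) and once from the general formula, to show that they agree.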

Equations for covariance (population parameter and sample estimator)

Equations for covariance (binary) (population parameter and sample estimator)

Equations for correlation (population parameter and sample estimator)
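A minimal sketch of the covariance and correlation cards, again assuming the common N versus N − 1 convention. For binary variables the population covariance reduces to P(X=1, Y=1) − P(X=1)P(Y=1). All data values and the `ddof` parameter name are made up for illustration.

```python
import math

def mean(xs):
    return sum(xs) / len(xs)

def covariance(xs, ys, ddof):
    """ddof=0 divides by N (population formula); ddof=1 divides by N - 1 (sample estimator)."""
    mx, my = mean(xs), mean(ys)
    total = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return total / (len(xs) - ddof)

def correlation(xs, ys):
    """Covariance divided by the product of standard deviations (the divisor cancels)."""
    return covariance(xs, ys, 0) / math.sqrt(covariance(xs, xs, 0) * covariance(ys, ys, 0))

x = [1, 2, 3, 4, 5]
y = [2, 4, 5, 4, 5]
print("sample covariance:", covariance(x, y, ddof=1))
print("correlation:", correlation(x, y))

# Binary case: compare the general formula with the probability shortcut.
bx = [1, 1, 0, 0, 1]
by = [1, 1, 0, 1, 0]
p11 = mean([a * b for a, b in zip(bx, by)])   # share of pairs where both equal 1
shortcut = p11 - mean(bx) * mean(by)
print("binary covariance:", covariance(bx, by, ddof=0), shortcut)
```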

Characteristics of an estimator

- It is a statistic, hence subject to sampling variation.

- It therefore has a distribution (with a PMF or PDF, and a CDF) called a ‘sampling distribution.’

- This sampling distribution has its own expected value and variance.
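The idea of a sampling distribution can be illustrated by simulation: draw many samples, apply the estimator to each, and look at the spread of the results. This sketch assumes a fair six-sided die as the population (mean 3.5, variance 35/12) and the sample mean as the estimator; the sample size and repetition count are arbitrary.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed for reproducibility
N = 25        # size of each sample (hypothetical choice)
REPS = 5000   # number of repeated samples

def draw_sample_mean():
    """Draw one sample of N die rolls and return its mean (one estimate)."""
    sample = [rng.randint(1, 6) for _ in range(N)]
    return sum(sample) / N

# The collection of estimates approximates the sampling distribution.
sample_means = [draw_sample_mean() for _ in range(REPS)]

print("mean of the sampling distribution:    ", statistics.mean(sample_means))
print("variance of the sampling distribution:", statistics.variance(sample_means))
print("theoretical variance (35/12)/N:       ", (35 / 12) / N)
```

The simulated mean should land near 3.5 and the simulated variance near (35/12)/N, up to simulation noise.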

Three properties of a good estimator

- Unbiasedness

- Efficiency

- Consistency
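Efficiency is usually understood as follows: among unbiased estimators of the same parameter, the one with the smaller sampling variance is the more efficient. As a hedged illustration, the sketch below compares the sample mean and the sample median as estimators of the centre of a standard normal population. The population, sample size and repetition count are assumptions made for the example.

```python
import random
import statistics

rng = random.Random(3)  # fixed seed for reproducibility
N = 25        # sample size (hypothetical choice)
REPS = 4000   # number of repeated samples

means, medians = [], []
for _ in range(REPS):
    sample = [rng.gauss(0.0, 1.0) for _ in range(N)]
    means.append(statistics.fmean(sample))
    medians.append(statistics.median(sample))

# Smaller sampling variance means a more efficient estimator.
var_mean = statistics.variance(means)
var_median = statistics.variance(medians)
print("variance of sample mean:  ", var_mean)
print("variance of sample median:", var_median)
```

For normal data the sample mean should show the smaller variance, so it is the more efficient of the two in this setting.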

Mathematical definition of bias and of an unbiased estimator

Mathematical definition of a biased and an unbiased estimator
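In the standard formulation, the bias of an estimator is its expected value minus the true parameter, and an estimator is unbiased when that difference is zero. The sketch below approximates the expectation by averaging over many simulated samples, comparing the variance estimator that divides by N with the one that divides by N − 1. The normal population with variance 4, the sample size and the repetition count are assumptions for illustration.

```python
import random

rng = random.Random(1)  # fixed seed for reproducibility
TRUE_VAR = 4.0   # population variance (sigma = 2), chosen for illustration
N = 5            # small sample size so the bias is visible
REPS = 20000     # number of repeated samples

def sum_sq_dev(sample):
    """Sum of squared deviations from the sample mean."""
    m = sum(sample) / len(sample)
    return sum((x - m) ** 2 for x in sample)

total_n, total_n_minus_1 = 0.0, 0.0
for _ in range(REPS):
    sample = [rng.gauss(0.0, 2.0) for _ in range(N)]
    ss = sum_sq_dev(sample)
    total_n += ss / N                # divide-by-N estimator
    total_n_minus_1 += ss / (N - 1)  # divide-by-(N-1) estimator

# Average estimate minus true value approximates the bias.
bias_biased = total_n / REPS - TRUE_VAR
bias_unbiased = total_n_minus_1 / REPS - TRUE_VAR
print("approx. bias, divide by N:    ", bias_biased)
print("approx. bias, divide by N - 1:", bias_unbiased)
```

Theory gives a bias of −σ²/N for the divide-by-N version and zero for the N − 1 version; the simulated values should be close to those, up to noise.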

Define standard error

The standard deviation of the sampling distribution of a statistic; a measure of how much the statistic varies from sample to sample.

Define standard deviation

A measure of variation in the data; equal to the square root of the variance of the data.

Suppose a set of iid samples of size N is drawn from a population with mean μ and variance σ².

What is the sample mean?

Suppose a set of iid samples of size N is drawn from a population with mean μ and variance σ².

What is the variance of the sample mean?

Suppose a set of iid samples of size N is drawn from a population with mean μ and variance σ².

What is the standard error?
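Under the iid setup on these cards, the standard results are: the sample mean is the sum of the observations divided by N, its expected value is μ, its variance is σ²/N, and its standard error is σ/√N. A minimal sketch follows; the function names and the numeric values are illustrative, and the plug-in version assumes σ is replaced by the sample standard deviation with an N − 1 divisor.

```python
import math
import statistics

def standard_error_of_mean(sigma, n):
    """Theoretical standard error of the sample mean: sigma / sqrt(n)."""
    return sigma / math.sqrt(n)

def estimated_standard_error(sample):
    """Plug-in version: replaces the unknown sigma with the sample SD (N - 1 divisor)."""
    return statistics.stdev(sample) / math.sqrt(len(sample))

sigma, n = 2.0, 100                     # hypothetical population SD and sample size
var_of_mean = sigma ** 2 / n            # variance of the sample mean
se = standard_error_of_mean(sigma, n)   # square root of that variance

print("variance of the sample mean:", var_of_mean)
print("standard error:", se)
print("estimated SE from data:", estimated_standard_error([2, 4, 4, 4, 5, 5, 7, 9]))
```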

What is meant by consistency? When is an estimator consistent?

An estimator is ‘consistent’ if, as the sample size N grows, the sequence of estimates can be shown mathematically to converge in probability to the population parameter; otherwise it is ‘inconsistent.’

What happens to sampling distribution with larger sample sizes?

For a consistent estimator, it concentrates around the population parameter: the probability that the estimate lies within any fixed distance of the parameter converges to 1.

This is why N appears in our calculations, for example in the standard error.
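Convergence in probability can be checked roughly by simulation: for each sample size, estimate the probability that the sample mean falls within a fixed tolerance of the true mean. This sketch assumes a Uniform(0, 1) population (mean 0.5); the tolerance, sample sizes and repetition count are arbitrary choices.

```python
import random

rng = random.Random(7)  # fixed seed for reproducibility
MU = 0.5      # mean of the Uniform(0, 1) population used here
EPS = 0.05    # tolerance around MU (hypothetical choice)
REPS = 2000   # number of repeated samples per sample size

def prob_close(n):
    """Share of simulated samples of size n whose mean lies within EPS of MU."""
    hits = 0
    for _ in range(REPS):
        xbar = sum(rng.random() for _ in range(n)) / n
        if abs(xbar - MU) < EPS:
            hits += 1
    return hits / REPS

probs = {n: prob_close(n) for n in (10, 100, 1000)}
for n, p in probs.items():
    print(f"N={n:5d}  P(|sample mean - mu| < {EPS}) ~ {p:.3f}")
```

The estimated probability should rise towards 1 as N increases, which is the concentration of the sampling distribution described above.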